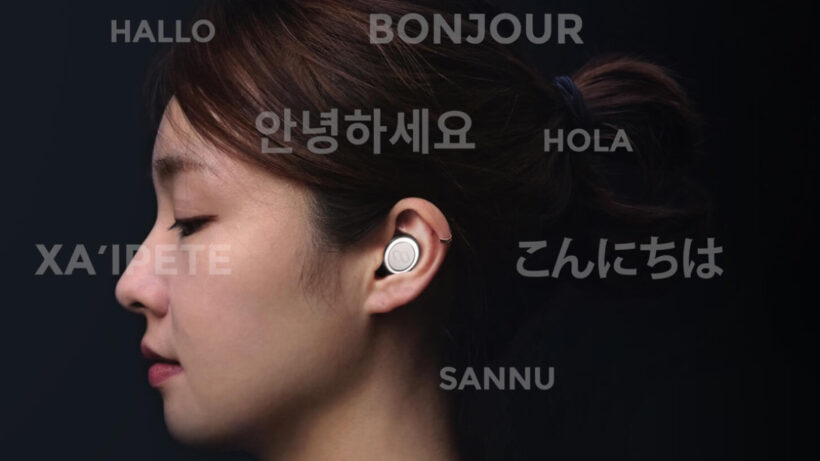

Can hearables ever achieve perfect live language translation?

Over the last few years we have been repeatedly teased about the prospect of hearables that can break down language barriers in discreet and elegant ways. Google’s Pixel Buds, Waverly Labs’ Pilot and former major hearable player Bragi all brought live translation powers in their own different ways.

In all of those examples, the promise of live translation that reaches the gold standard of a human interpreter hasn’t truly been realised – but this doesn’t mean the products aren’t working well. Some are helping millions of travellers and businesses crash through age-old language barriers. Whether it’s ordering off a menu, finding directions, or opening a new trade route, these solutions are already good enough to make a massive difference.

However, there are still plenty of limitations. The current hearable solutions are very dependent on companion smartphone hardware and an app interface as the go-between. Language processing and conversion mostly take place in the cloud, which means lag. Voice recognition services still underperform when subjected to anything but the Queen’s finest, and personal assistants don’t understand the tone and emphasis that give our speech context and meaning. Also, the microphones within the hardware suck at isolating individual voices in noisy environments.

With these challenges, is it even possible to create a real world Babelfish from The Hitchhiker’s Guide To The Galaxy? Or will the human interpreter have a job for life? We spoke to key leaders and enthusiasts in the hearable tech space to find out.

Searching for a hearable hero

Dave Kemp runs the blog Future Ear and is business development manager for Oaktree Products – a supplier of clinical supplies and assistive listening devices to the hearing care industry. He believes we’re well on the way to an incredible breakthrough in the language translation space and Google will be the company responsible.

“The thing that excites me is that the underlying technology to make that all possible is approaching a point where it’s actually feasible, if it isn’t already,” he told Wareable.

“To have real time language translation you need to have intelligent speech recognition and have it intelligently parse through dialects. Google has so much data around the nuances of those different dialects and languages.

“Google is also at the forefront of advanced natural language processing, while the Google Assistant’s speech recognition has improved significantly over time. Google can marry NLP and advanced speech recognition with the Assistant and Google Translate.”

But software is only one part of the equation. Everything else rests on hardware. Andy Bellavia is the director of market development at Knowles Corp, working in the hearable space. He believes the route to nirvana for live language translation comes via the hearing aid industry.

He says: “Hearing aids are primarily focused on providing the clearest speech possible. The speech processors in all the major hearing aid brands are much more sophisticated than in consumer hearables today. They really do a lot and with really low power.

“You can see the convergence between hearing aids and hearables. Traditionally, hearing aids have been focused on speech intelligibility and long battery life while hearables offered features such as smart assistants and language translation. Now the lines are blurring with hearables offering hearing enhancement and hearing aids incorporating translation, biometrics, and more.”

In the smart hearing aid industry, companies like ReSound, Starkey and Nuheara are pioneering the ability to zero in on one voice to aid speech recognition. Motion sensors can determine which way the wearer is looking, while near and far-field microphones can close the gap between people conversing. All of this tech could be crucial in assisting live language translation systems.

More than words: Trying to replicate emotional context

However, for any hearable to emulate a human interpreter, the translation must be contextually rich. Tone, emphasis and body language convey meaning in a number of languages. Japanese, for example, has three different grammar structures to convey politeness and formality. And how on earth do we teach a machine to translate sarcasm?

“The first step in natural language translation is to understand the context of the original statement and that’s going to take a machine learning algorithm to understand my emotion and intent when I say something,” Andy Bellavia adds. “Without that baseline, the translation is going to be more wooden and not contextually rich.”

Tone of voice and volume can go a long way here. Amazon is using neural networks to train Alexa to decipher emotion in speech, for example. Another possible solution could be to leverage other biometric indicators. Sensors measuring heart rate data, body temperature and sweat could all be used to help personal assistants learn the difference between our fear, delight, panic and stress. Devices like the Starkey Livio AI hearing aid already include a heart rate sensor. It’s not outlandish to think that a raised heart rate could assist a language translation tool in identifying urgency when using the word “help”, for example.

“There’s a lot of research into voice biometrics about the true breadth and depth of tone of voice and a lot of use cases will centre on deciphering sentiment,” says Dave Kemp. “The ear is such a unique point in the body for biometric connection. It’s a better place to record biometrics than your wrist, and you can get readings you cannot get on the wrist. The tympanic membrane emits body temperature, for example.”

But adding emotional context to recognised speech opens a privacy can of worms. Do consumers really want to share their sacred emotional states with tech companies (and god knows who else) in order to improve language translation? Is the juice worth the squeeze? As with other biometric tech like Face ID, data pertaining to the emotional context of our speech surely has to stay securely on the device if it is to come to fruition.

Eliminating the middle man – the mobile app

Keeping everything on the device means marginalising the power of the cloud, but this can have another serious benefit for users: minimising latency. Even with the might of Google Translate, there’s a gap in translation while the data is sent to the cloud, processed and beamed back down to the smartphone.

In our review of the Pixel Buds, we concluded the translation feature worked well and speedily enough, but all it really did was move elements of the Google Translate app to the ear and save people the hassle of passing the phone back and forth. However, ditching the app completely (something both Doppler and Bragi were working on before exiting the market) raises a number of logistical challenges.

Accommodating additional processing power, super-powered directional microphones and biometric sensors in AirPods-sized earbuds is an incredible challenge. Especially if you want passable battery life. And let’s not ignore the obvious drawback of a standalone solution. Minus the app, how do you communicate with a person who isn’t wearing a hearable? Sharing earbuds with a stranger? No thanks.

Waverly Labs’ new prosumer Ambassador device (the successor to the Pilot) addresses the latter point somewhat with an over-ear solution that can be shared more hygienically. Travelling with a pair enables you to communicate with anyone. The speed of translation displayed below also goes a long way to negate concerns over latency, but we’re yet to put the device to the test ourselves.

Is simultaneous translation really the holy grail for hearables?

MyManu is one of the companies pioneering live language translation through its Click range of hearables. Founder and CEO Danny Manu says overcoming technical and logistical challenges must be balanced against whether they add value to the customer.

In a pilot test at major hotel chains, MyManu found that live language translation (Babelfish-style) perhaps shouldn’t be the end goal. It tested live translation against an interpreter-style solution that translated the sentence after it was completed.

He told Wareable: “98% of users who tested it, including all of the businesses we were working with, preferred the interpreter. They found simultaneous translation too confusing. The mind wasn’t able to process all of the information. With speech and translation happening at the same time people don’t know who to listen to.”

That makes sense. Our natural inclination when talking to people is to give them our full attention. To look for those body language cues and facial expressions. It’s called “engaging” in conversation for a reason, right?

A translation device can’t replicate natural conversation when translating simultaneously, any more than it can when translating post-sentence. But as lag from cloud translation and on-device options continues to decrease, the dead air that follows each sentence will become a thing of the past.

This, not real-time simultaneous translation, could be the way forward, especially when the attainable goals in speech recognition, accuracy and context are met.

“We decided to channel our efforts to deliver something the customer wants – that can be used in a real life situation,” Manu added. “That’s instead of trying to show how clever we are by developing something that live translates as I’m actually speaking, when in reality it’s not going to be useful.”

That’s not to say MyManu is abandoning the push for advancement beyond mobile-based translation. Years of extensive R&D will yield forthcoming app and hardware updates that ensure greater accessibility, while addressing many of the challenges live language translation presents. “We’re focusing heavily on what adds value,” Manu added, while asking us to stay tuned.

The perfect simultaneous language translation hearable may remain in the realm of science fiction, but by choice. However, the path to truly functional devices with low latency, advanced speech recognition and even greater understanding of context and emotion is well within our grasp.